Introduction

Developers today do more than write code. Increasingly, they instruct applications on how to respond (and sometimes those applications make us sound dumber 😄). Welcome aboard the Large Language Model (LLM) train, where your application can write emails, answer questions, create content and even debug code.

Integrating LLMs into applications was once a luxury for software development teams. As these tools spread, it is steadily becoming a must-have.

Whether it is for chatbots, virtual assistants or intelligent automation tools, LLMs are enabling a new generation of user experiences. Yet building with LLMs is not just a matter of calling an API; it is an approach that needs the right tools and knowledge.

In this practical developer guide, we’ll explore how to incorporate LLMs into your applications and build smarter solutions that can scale and be interactive.

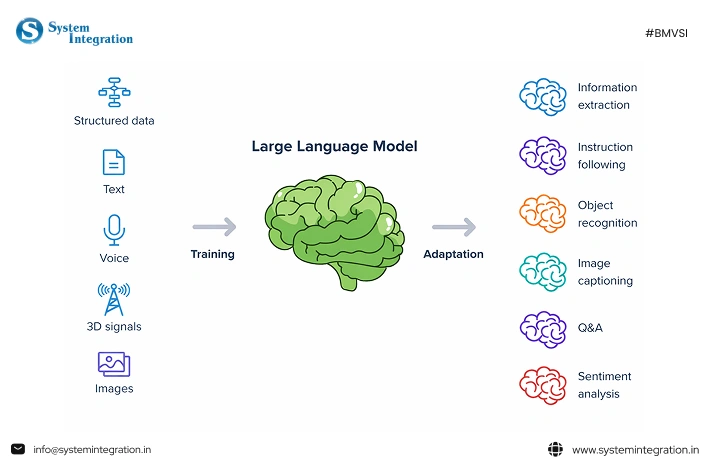

What Are Large Language Models (LLMs)?

Large Language Models (LLMs) are sophisticated artificial intelligence systems that can comprehend, generate, and communicate in human language. These models are trained on massive amounts of text data (books, websites, articles and other sources), from which they learn patterns, grammar, context and the relationships between words.

LLMs are generally based on transformer deep learning architectures that enable processing and analyzing language at scale. Many of the AI applications we see today, such as chatbots, virtual assistants and intelligent search solutions, use popular foundation models such as GPT, Llama or Gemini.

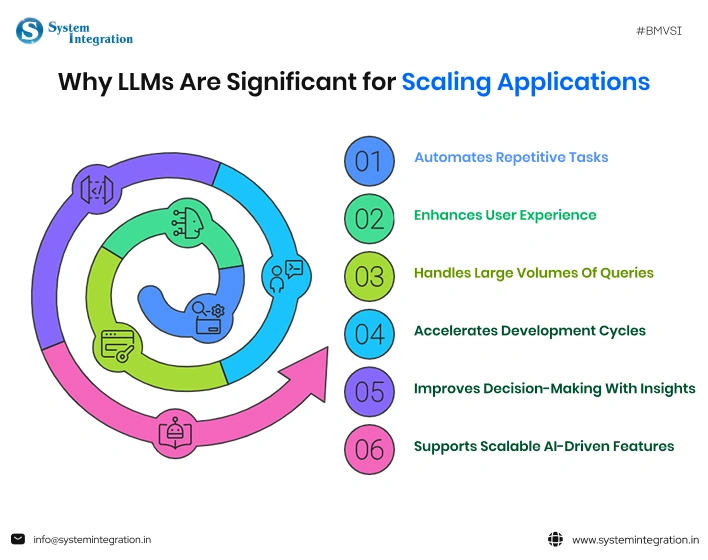

Why Do LLMs Matter for Scaling Applications?

- Automates repetitive tasks

LLMs automate customer support, content creation, and data processing, which leads to less manual work.

- Enhances user experience

Applications can provide conversational interfaces and respond in real time.

- Handles large volumes of queries

LLMs allow applications to handle thousands of user interactions at the same time.

- Accelerates development cycles

Developers can rapidly prototype intelligent features without building sophisticated ML models from the ground up.

- Improves decision-making with insights

LLMs can query and analyse large datasets and return meaningful summaries and feedback.

- Supports scalable AI-driven features

As demand for an application increases, businesses can scale its AI capabilities along with it.

Popular LLMs and Platforms for Integration

➔ An overview of top LLM suppliers

The LLM ecosystem for software development is changing quickly, and its major players provide strong models for a range of purposes. With cutting-edge models known for their dependability and high performance, companies like OpenAI, Google DeepMind, and Anthropic dominate the proprietary market.

In the meantime, companies like EleutherAI and Meta make substantial contributions to open-source innovation, offering developers greater flexibility and deployment control.

➔ Proprietary versus open-source models

Developers must weigh convenience and flexibility when deciding between proprietary and open-source LLMs. Customisation, on-premise deployment, and cost control are all possible with open-source models, such as those from Meta, but managing them requires technical know-how.

Although they have usage costs and less transparency, proprietary models from companies like OpenAI or Anthropic offer better performance right out of the box, managed infrastructure, and ease of use.

➔ APIs and platforms (OpenAI, Hugging Face, etc.)

Modern LLM integration is primarily API-driven, which makes previously complex models much more accessible. Platforms like OpenAI offer APIs for chat, embeddings and fine-tuning, while Hugging Face hosts a massive repository of pre-trained models along with deployment tools.

Cloud providers such as Microsoft Azure and Amazon Web Services add LLM capabilities to their ecosystems, which makes it practical to run scalable, production-ready AI solutions.

✨ How to select the right model for your use case

Choosing the appropriate LLM hinges on application needs, available budget, performance expectations and data sensitivity. Open-source models, such as those from Meta, are better suited to custom, privacy-focused applications, while APIs from providers like OpenAI are a good fit for quick deployment and high accuracy.

Top Use Cases of Integrating LLM

- Customer Support Automation
  - AI chatbots and virtual assistants automate responses
  - Answers FAQs, resolves tickets and handles customer queries outside business hours
  - Reduces response time and improves end-user satisfaction
  - Supports multilingual communication for audiences worldwide
  - Integrates with CRM systems for personalised support

- Content Generation and SEO Writing
  - Writes blogs, articles and website copy in record time
  - Suggests specific keywords to optimise content for SEO
  - Generates product descriptions and marketing copy at scale
  - Helps rewrite, summarise, and improve readability
  - Accelerates content automation workflows for marketing teams

- Code Assistance and Developer Tools
  - Assists in diagnosing errors and improving code efficiency
  - Generates code snippets and documentation
  - Helps in learning new programming languages and frameworks
  - Reduces development time and improves developer productivity

- Data Analysis and Summarisation
  - Derives insights from large datasets and documents
  - Condenses long reports into simple summaries
  - Automates data analysis and insight generation
  - Converts unstructured data into structured formats
  - Delivers quick analytical results that assist decision-making

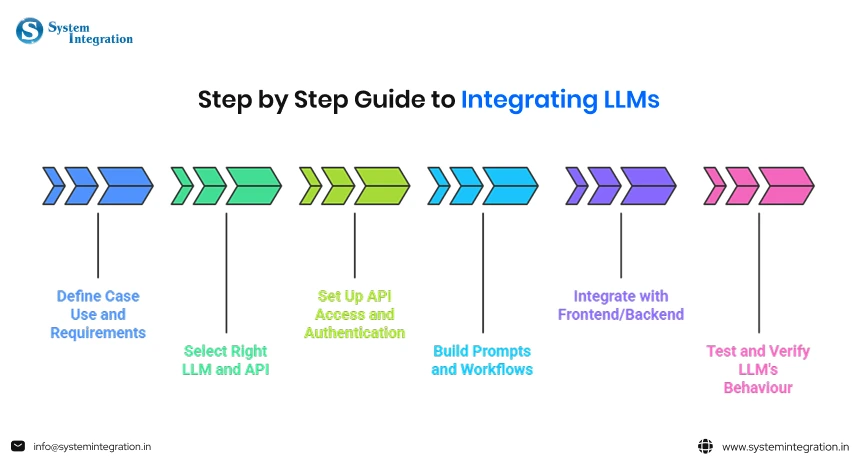

Step-by-Step Guide to Integrating LLMs

Define Your Use Case and Requirements

It might seem obvious, but before starting with an LLM, be crystal clear about which challenge you want to solve and exactly how AI will help.

- Determine the scope of your project

- Identify high-impact use cases, such as document summarisation

- Define technical and architectural requirements, such as model selection

- Address data privacy, security and compliance to protect sensitive data

Select the Right LLM and API

Choosing the right model is critical for performance and scalability. Providers such as OpenAI, Anthropic and Hugging Face differ in capabilities, pricing and flexibility.

- Compare model capabilities (accuracy, speed and context length)

- Examine pricing and the cost of token usage

- Choose between cloud APIs and self-hosted models
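
To make the token-cost comparison concrete, here is a rough sketch of a monthly cost estimate. The prices, traffic figures and the `Pricing` and `estimate_monthly_cost` names are illustrative assumptions, not any provider's real rates; substitute the numbers from the pricing page of the provider you are evaluating.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    """Hypothetical prices in USD per one million tokens."""
    input_per_million: float
    output_per_million: float


def estimate_monthly_cost(
    pricing: Pricing,
    requests_per_day: int,
    input_tokens: int,
    output_tokens: int,
    days: int = 30,
) -> float:
    """Estimate the monthly spend for a steady request volume."""
    # Cost of a single request: input and output tokens are billed separately.
    per_request = (
        input_tokens * pricing.input_per_million
        + output_tokens * pricing.output_per_million
    ) / 1_000_000
    return per_request * requests_per_day * days


# Made-up numbers purely for illustration.
example_pricing = Pricing(input_per_million=1.0, output_per_million=4.0)
monthly_cost = estimate_monthly_cost(
    example_pricing, requests_per_day=10_000, input_tokens=500, output_tokens=200
)
print(f"Estimated monthly cost: ${monthly_cost:.2f}")
```

Running the same function with each candidate's prices gives a like-for-like figure to weigh against accuracy, speed and context length.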

Set Up API Access and Authentication

After you choose a provider, it is time to set up API access. Usually, this consists of creating API keys, configuring authentication and defining environment variables.

- Generate API keys and store them securely

- Configure authentication headers in requests

- Set environment variables for security

- Monitor API usage and rate limits
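
The checklist above could look something like the following on the backend. This is a minimal sketch, assuming the key lives in an environment variable called `LLM_API_KEY` (a hypothetical name) and that the provider accepts a Bearer token in the `Authorization` header. Some providers use a different header, so check their documentation.

```python
import os


def load_api_key(env_var: str = "LLM_API_KEY") -> str:
    """Read the API key from the environment instead of hard-coding it."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable before starting the app")
    return key


def build_headers(api_key: str) -> dict[str, str]:
    """Build request headers, assuming a Bearer-token scheme."""
    return {
        "Authorization": f"Bearer {api_key}",
        "Content-Type": "application/json",
    }


def mask_key(api_key: str) -> str:
    """Return a version of the key that is safe to write to logs."""
    if len(api_key) <= 4:
        return "****"
    return "*" * (len(api_key) - 4) + api_key[-4:]
```

Failing fast when the variable is missing surfaces configuration mistakes at startup rather than on the first user request, and masking the key keeps it out of log files.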

Build Prompts and Workflows

Since prompts sit between user input and LLM output, prompt design is a critical step.

- Design clear and specific prompts

- Where the token budget allows, provide examples for few-shot prompting

- Handle edge cases and ambiguous input

- Create workflows for multi-step tasks
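
As an illustration of few-shot prompting, the sketch below assembles a list of chat messages from an instruction, a handful of examples and the user's input. The role/content message shape is an assumption modelled on common chat-style APIs, and `build_few_shot_messages` is a hypothetical helper, so adapt the structure to whatever your provider expects.

```python
def build_few_shot_messages(
    instruction: str,
    examples: list[tuple[str, str]],
    user_input: str,
) -> list[dict[str, str]]:
    """Assemble a few-shot chat prompt as a list of role/content messages."""
    cleaned = user_input.strip()
    if not cleaned:
        # Reject empty input early instead of sending a meaningless prompt.
        raise ValueError("user_input must not be empty")

    messages = [{"role": "system", "content": instruction}]
    # Each example becomes a user turn followed by the ideal assistant reply.
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": example_input})
        messages.append({"role": "assistant", "content": example_output})
    messages.append({"role": "user", "content": cleaned})
    return messages


messages = build_few_shot_messages(
    instruction="Classify the sentiment of the review as positive or negative.",
    examples=[
        ("The checkout was quick and easy.", "positive"),
        ("My order arrived broken.", "negative"),
    ],
    user_input="  Support never answered my ticket.  ",
)
```

Keeping prompt construction in one function makes it easier to test edge cases and to change the examples without touching the rest of the workflow.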

Integrate with Frontend/Backend

Once you develop the LLM logic, plug it into your app stack. API calls and processing are usually done on the backend, with results displayed to users by the frontend. Seamless integration enables smoother interaction between users and the application, along with real-time responses.

- Build backend services that handle the LLM calls

- Design user-friendly interfaces for interaction

- Manage async responses and loading states

- Ensure scalability and performance optimisation
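
One way to manage async responses is to wrap the model call in a timeout so the interface can show a fallback message instead of hanging. The sketch below uses Python's `asyncio`; `fake_llm_call` is a stand-in for a real provider request, and the timeout value and fallback text are illustrative assumptions.

```python
import asyncio

FALLBACK_MESSAGE = "The assistant is taking longer than usual. Please try again."


async def fake_llm_call(prompt: str, delay_s: float) -> str:
    """Stand-in for a real network call to an LLM provider."""
    await asyncio.sleep(delay_s)
    return f"echo: {prompt}"


async def answer_with_timeout(prompt: str, delay_s: float, timeout_s: float = 0.05) -> str:
    """Return the model's answer, or a fallback if the call exceeds the timeout."""
    try:
        return await asyncio.wait_for(fake_llm_call(prompt, delay_s), timeout=timeout_s)
    except asyncio.TimeoutError:
        # The pending call is cancelled by wait_for; the UI gets a graceful message.
        return FALLBACK_MESSAGE


if __name__ == "__main__":
    print(asyncio.run(answer_with_timeout("hello", delay_s=0.0)))
    print(asyncio.run(answer_with_timeout("hello", delay_s=1.0)))
```

The frontend can show a loading state while the coroutine is pending and swap in either the answer or the fallback once it resolves.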

Test and Iterate

Testing to verify the LLM behaves properly in practice is critical. Assess outputs, spot discrepancies, and iterate on prompts or flows accordingly.

- Test with diverse real-world inputs

- Analyse errors and refine prompts

- Monitor performance and response quality

- Continuously review and improve the system
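
A small regression harness is one way to put this into practice. The sketch below runs a set of prompts through any callable model and records which outputs miss an expected phrase. `run_eval` and `stub_model` are hypothetical names, and substring matching is a deliberately simple check; real evaluations often need richer scoring.

```python
from typing import Callable


def run_eval(model: Callable[[str], str], cases: list[tuple[str, str]]) -> dict:
    """Run each prompt through the model and check for an expected substring."""
    failures = []
    for prompt, expected in cases:
        output = model(prompt)
        # Case-insensitive containment keeps the check tolerant of phrasing.
        if expected.lower() not in output.lower():
            failures.append({"prompt": prompt, "expected": expected, "output": output})
    return {
        "total": len(cases),
        "passed": len(cases) - len(failures),
        "failures": failures,
    }


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call, used so the harness runs offline."""
    if "France" in prompt:
        return "Paris is the capital of France."
    return "I am not sure."


report = run_eval(
    stub_model,
    [
        ("What is the capital of France?", "Paris"),
        ("What is the capital of Peru?", "Lima"),
    ],
)
```

Re-running the same cases after every prompt change shows quickly whether a tweak fixed one input at the expense of another.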

Common Pitfalls in LLM Application Roadmaps

Overestimating LLM Capabilities

Many teams start with the assumption that an LLM can deliver perfect, human-like intelligence. In practice, these models have drawbacks, including bias and gaps in subject-specific knowledge.

Ignoring Data Privacy

General-purpose LLMs that are used without being adapted to a particular domain frequently produce inaccurate results. Skipping the steps that safeguard data accuracy and data protection can lead to serious consequences.

Building without a feedback mechanism

An LLM product is never truly done; it evolves with every user interaction. Launching it without a feedback loop that captures real-world usage data and user satisfaction can lead to stagnation.

Poor integration with existing systems

LLMs rarely operate in isolation. Neglecting to design for interaction with existing systems or software infrastructure and APIs is a typical error. As a result, there may be bottlenecks or a bad user experience.

Bias concerns

LLM products may inadvertently reinforce negative stereotypes if bias and unfairness are not addressed at an early stage. The roadmap should therefore include responsible AI practices.

Conclusion

LLMs are enabling a new generation of user experiences, from chatbots and virtual assistants to intelligent automation tools. Building with them takes more than calling an API; it requires the right tools and knowledge. This guide has walked through, step by step, how to integrate an LLM into your applications.

For AI integration services, partner with an AI software development company whose team of experts can assist you throughout the journey and help you build a scalable application.

FAQs

Here are the main benefits of integrating an LLM into software:

- Accelerated development speed

- Enhanced customer services

- Improved analytics

- 24/7 automated support

Integrating an LLM involves selecting an AI model, setting up API access or hosting, designing prompt logic, and handling data securely. Key steps include using SDKs, implementing retrieval-augmented generation (RAG) for context, and employing frameworks.

Retrieval-Augmented Generation (RAG) is an AI framework that improves the accuracy and relevance of large language models (LLMs) by connecting them to external, authoritative knowledge bases before they generate a response.
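
The retrieval half of RAG can be sketched without any external services. The example below ranks documents by word-overlap cosine similarity and places the best matches into the prompt. Production systems typically use embedding models and a vector store instead, so treat the scoring here, along with the `retrieve` and `build_rag_prompt` names, as illustrative assumptions.

```python
import math
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    """Lowercase the text and split it into alphanumeric tokens."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, documents: list[str], k: int = 2) -> list[str]:
    """Return the k documents most similar to the query."""
    query_vec = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda doc: cosine(query_vec, Counter(tokenize(doc))),
        reverse=True,
    )
    return ranked[:k]


def build_rag_prompt(query: str, documents: list[str], k: int = 2) -> str:
    """Put the retrieved passages in front of the question."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


knowledge_base = [
    "Refunds are processed within 5 business days.",
    "Our office is closed on public holidays.",
    "Shipping takes 2 to 4 days.",
]
prompt = build_rag_prompt("How long do refunds take?", knowledge_base, k=1)
```

The resulting prompt is what gets sent to the model, which then answers from the retrieved passage rather than from its training data alone.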

To keep an LLM integration safe, implement input/output validation layers that check for personally identifiable information (PII) or harmful content. Never expose API keys on the client side.
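
A validation layer for PII can start as simply as the sketch below, which redacts email addresses and phone-like numbers before text is sent to a model or shown to a user. The regular expressions are rough assumptions that will miss many formats; a dedicated PII detection tool is a better fit for production.

```python
import re

# Rough patterns for illustration; real-world PII detection needs broader coverage.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def redact_pii(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def contains_pii(text: str) -> bool:
    """Report whether the text matches any of the PII patterns."""
    return bool(EMAIL_RE.search(text) or PHONE_RE.search(text))


sample = "Mail jane.doe@example.com or call +1 415-555-0100 today"
print(redact_pii(sample))
```

Running the same check on model output as well as user input covers both directions of the validation layer.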