Introduction

We all know how every AI project begins. It’s decided in a meeting, and everyone agrees, ‘Let’s build an AI application.’ A few days later there’s a demo, and everyone treats the AI MVP like it’s going to be the next big thing in tech 😅.

And then reality steps in, hitting you on the head like a brick. Suddenly, your model fails on real data, servers buckle under load, and scaling the product feels impossible, but you still have to do it anyway. This is the stage at which many AI experiments either mature into robust products or quietly fade away amid growing competition.

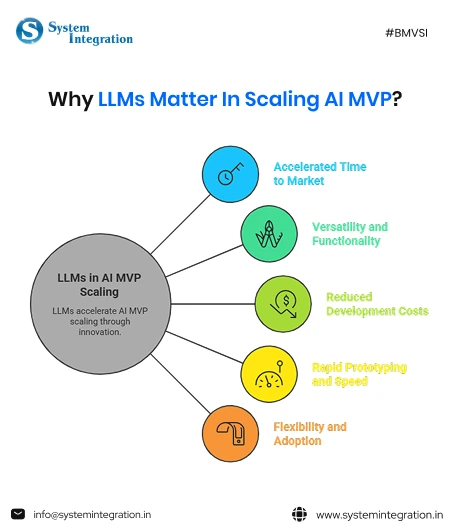

However, building a scalable AI application entails much more than a working model. It also requires intelligent data pipelines, a stable architecture, continuous model optimization and a clear product vision. In this blog, we will look at how businesses can evolve a basic AI MVP into a scalable, production-grade AI product that drives real value.

What is an AI MVP?

“A minimum viable product (MVP) is the simplest version of a product or feature that enables a team to assess whether users will derive meaningful value from that product.”

An MVP is essentially an experiment designed to gather feedback and determine whether an idea has the potential to succeed in the market before investing in a full-scale solution. In the initial stage of mobile app development, creating an MVP assists firms in testing their concept more quickly and reducing risks.

Today, the usability of an MVP is just as important as its functionality. If an MVP is difficult to use, users may skip it altogether, not because the idea lacks inherent value, but because the design feels difficult to navigate.

Its key characteristics

- Focus on core functionality: it only includes the features needed to solve a specific problem, avoiding unnecessary complexity.

- Rapid development and feedback: it can be built in days or weeks, so teams can test it with real users and gather feedback quickly.

- AI-specific elements: it often includes data cleaning, pre-trained models, or human-in-the-loop approaches to ensure reliability in the initial stages.
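The human-in-the-loop idea from the last point can be sketched in a few lines. This is a minimal illustration, not a prescribed design: the `Prediction` class, the `route` function and the `0.8` threshold are all hypothetical names and values chosen for the example. The model's answer is accepted when it is confident, and sent to a person otherwise.

```python
from dataclasses import dataclass

# Hypothetical threshold: predictions below this confidence go to a person.
REVIEW_THRESHOLD = 0.8


@dataclass
class Prediction:
    label: str
    confidence: float  # model's probability for `label`, between 0 and 1


def route(prediction: Prediction, threshold: float = REVIEW_THRESHOLD) -> str:
    """Return "auto" to accept the model's answer, "human" to queue it for review."""
    return "auto" if prediction.confidence >= threshold else "human"


if __name__ == "__main__":
    for p in [Prediction("spam", 0.97), Prediction("not_spam", 0.55)]:
        print(p.label, "->", route(p))
```

In an MVP, the "human" branch can be as simple as a shared review queue; the reviewed answers can later become training data.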

Why is it Challenging to Scale an AI MVP?

1. Emerging bottlenecks in distributed AI workloads

When your AI MVP is scaled across multiple systems, performance bottlenecks generally arise. Operations such as processing large datasets, synchronizing models and managing compute resources can slow down development and adversely affect real-time AI performance.

2. Cross-cloud data transfer latency in hybrid environments

AI applications typically run in hybrid or multi-cloud environments. Transferring huge amounts of training and inference data across cloud platforms can add latency, prolonging response time and degrading overall system efficiency.

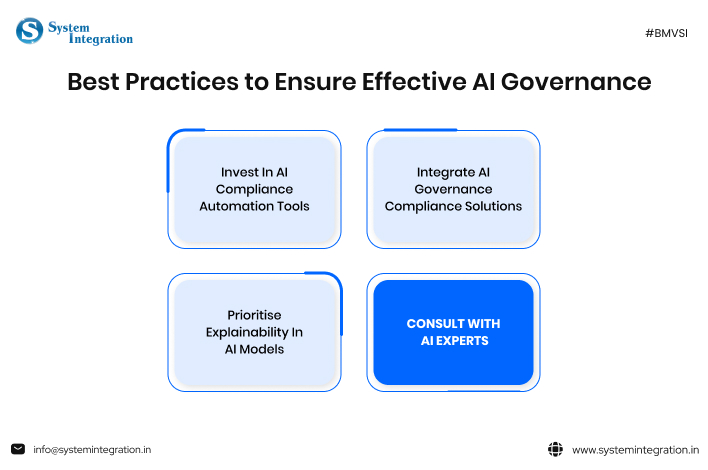

3. AI governance and compliance across jurisdictions

As AI products are distributed all over the world, organisations have to comply with differing data protection and AI governance regulations. It is becoming crucial for companies to manage data privacy and ethical AI usage, and to decide how they will use the technology and where they must disclose that use.

4. Complexity in AI model versioning and dependency control

To scale AI applications, you must manage many model versions along with their associated datasets and dependencies. Without adequate version control and tracking systems in place, it becomes exceedingly difficult for developers to maintain model consistency, reproducibility, and reliable deployment.
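To make the versioning point concrete, here is a minimal sketch using only the Python standard library. It is not how any particular registry tool works; `ModelRecord`, `fingerprint` and the example values are hypothetical. The idea is simply that a model version is tied to a hash of its training data and its pinned dependencies, so any change produces a new identifier.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def fingerprint(data: bytes) -> str:
    """Short SHA-256 digest that changes whenever the bytes change."""
    return hashlib.sha256(data).hexdigest()[:12]


@dataclass(frozen=True)
class ModelRecord:
    name: str
    version: str
    dataset_hash: str   # fingerprint of the training data
    dependencies: dict  # package name -> pinned version

    def record_id(self) -> str:
        # Hash the whole record so a change to data or dependencies gives a new id.
        payload = json.dumps(asdict(self), sort_keys=True).encode()
        return fingerprint(payload)


if __name__ == "__main__":
    record = ModelRecord(
        name="churn-model",                          # hypothetical model name
        version="1.2.0",
        dataset_hash=fingerprint(b"training rows"),  # stand-in for real data
        dependencies={"scikit-learn": "1.4.2"},      # illustrative pin
    )
    print(record.record_id())
```

Storing a record like this next to every deployed model makes it possible to answer "which data and which libraries produced this prediction?" later on.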

Top Technologies for Building a Robust AI Application

➔ Machine Learning Frameworks

Frameworks such as TensorFlow, PyTorch, and Scikit-learn assist developers in efficiently constructing, training, and optimizing AI models. They offer robust libraries, along with the scalability and versatility needed to build smart solutions across domains.

➔ Cloud Computing Platforms

Amazon Web Services (AWS), Microsoft Azure and Google Cloud are among the cloud platforms that offer scalable infrastructure for AI workloads. They provide GPU computing, data storage, and AI software services that facilitate training and deploying large-scale models.

➔ Data Engineering and Processing Tools

For example, Apache Spark processes large datasets in memory and supports complex analytics on them, Kafka works well as a queuing mechanism for streaming data, and Airflow helps automate workflows and ETL processes. Together, these tools deliver reliable, continually updated data to AI applications.

➔ Containerization and Orchestration Tools

Technologies such as Docker and Kubernetes assist in packaging AI applications and managing multiple deployments across environments. They help with scalable infrastructure, easy-to-manage applications, and consistent performance in production systems.

➔ AI APIs and Integration Platforms

AI APIs and integration platforms empower developers to incorporate AI features into applications rapidly. They make it easy to connect AI models with existing systems, enabling automation and intelligent insights without rebuilding those systems.

➔ Monitoring and MLOps Tools

Model monitoring and MLOps tools track model accuracy and data drift, and automate the retraining of models. Tools such as MLflow and Kubeflow increase reliability, manage model versions, and enable continuous improvement in production settings.
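Data drift can sound abstract, so here is a minimal sketch of one way to measure it. It uses the population stability index (PSI), a technique chosen for this example rather than something the tools above necessarily use; the function name, bin count and sample data are assumptions. PSI compares how a feature was distributed at training time with how it is distributed in live traffic.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population stability index between a baseline sample and a live sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back to 1.0 if the baseline is constant

    def shares(values):
        counts = [0] * bins
        for v in values:
            # Clamp so live values outside the baseline range land in the edge bins.
            idx = min(max(int((v - lo) / width), 0), bins - 1)
            counts[idx] += 1
        # eps avoids log(0) for empty bins.
        return [max(c / len(values), eps) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


if __name__ == "__main__":
    baseline = [i / 100 for i in range(100)]  # stand-in for training-time values
    live = [x + 0.5 for x in baseline]        # the same feature after a shift
    print("no drift:", psi(baseline, baseline))
    print("shifted: ", psi(baseline, live))
```

A commonly cited rule of thumb treats a PSI above roughly 0.25 as a significant shift worth investigating, though the right threshold depends on the feature and the business context.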

Best Practices for Building Scalable AI Applications

- Always Begin with a Clear Business Problem

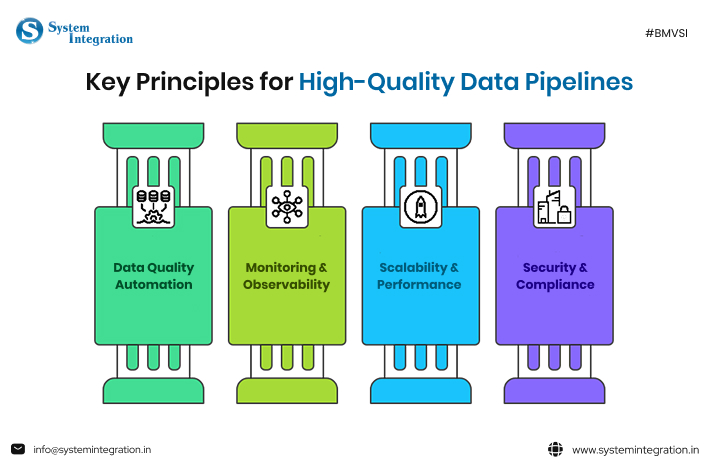

Define the exact problem your AI application will be solving. When planning AI mobile app services, make sure that the AI capabilities are aligned with your business goals so that they add measurable value by solving real operational issues.

- Build High-Quality Data Pipelines

Establish pipelines for data collection, cleaning, labelling, and processing. Having consistent, well-structured data ensures that models are trained properly, predictions are accurate, and it becomes easier to scale AI applications.
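As a rough illustration of the cleaning stage of such a pipeline, here is a minimal sketch in plain Python. The schema in `REQUIRED_FIELDS` and the `clean` function are hypothetical; a real pipeline would add its own validation rules. The step drops incomplete rows, normalises text and removes duplicates before anything reaches training.

```python
from typing import Iterable, Iterator

REQUIRED_FIELDS = ("user_id", "text", "label")  # hypothetical schema


def clean(records: Iterable[dict]) -> Iterator[dict]:
    """Yield normalised records, skipping incomplete rows and duplicates."""
    seen = set()
    for record in records:
        row = {}
        for field in REQUIRED_FIELDS:
            value = record.get(field)
            row[field] = "" if value is None else str(value).strip()
        if not all(row.values()):
            continue  # drop rows with a missing or empty required field
        row["label"] = row["label"].lower()
        key = (row["user_id"], row["text"])
        if key in seen:
            continue  # drop duplicates so they do not skew training
        seen.add(key)
        yield row


if __name__ == "__main__":
    raw = [
        {"user_id": 1, "text": " great app ", "label": "POS"},
        {"user_id": 1, "text": "great app", "label": "pos"},  # duplicate
        {"user_id": 2, "text": None, "label": "neg"},         # incomplete
    ]
    print(list(clean(raw)))
```

Because `clean` is a generator, it can sit between a data source and a training job without loading the whole dataset into memory.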

- Use Scalable Cloud Infrastructure

Use cloud infrastructure for computing, data storage and AI workload management. A scalable infrastructure makes sure that your application can manage growing data volumes and user load without performance problems.

- Implement MLOps for Continuous Deployment

Use MLOps processes to automate model training, testing, deployment, and monitoring. This allows you to deliver updates more quickly, improves collaboration between teams, and ensures consistent AI performance in production environments.

- Emphasize Model Monitoring and Optimization

Monitor and log model accuracy, latency and real-world performance. Continuous monitoring can often detect data drift early, which tells you when to retrain the model and how to optimise its deployment so that AI performance stays consistent.

- Ensure Security and Compliance

Follow regulatory standards, implement strong security policies and protect sensitive data. Responsible AI governance not only preserves user trust but also keeps you compliant with evolving data protection and privacy laws.

Future Trends in Building AI Applications

1. AI Agents and Autonomous Systems – More AI applications will deploy intelligent agents that can automate multi-staged workflows, reason over data, make decisions, and interact with multiple systems independently without needing constant human oversight.

2. Edge AI and In-Device Processing – More AI models will be executed on devices like sensors, smartphones and other IoT systems to provide rapid decision-making without sending data to the cloud.

3. Responsible and Explainable AI – Businesses will focus on how explainable and transparent their models are, and will weigh ethical and regulatory compliance when making decisions.

4. Low-Code and No-Code AI Platforms – Development platforms will enable businesses to build AI applications faster with minimal coding, making these applications accessible to non-technical business teams.

5. AI-Driven Automation Across Industries – AI will continue to transform industries through automation.

Bottom Line

Scaling AI applications is more than just adding power. It’s about building intelligent, ethical, and efficient systems that can evolve with your business. By focusing on enhancing operational performance through AI, you can drive both the immediate impact and the long-term success of your AI application.

To achieve this, however, you need to partner with an AI software development company that is proficient in making your AI infrastructure more agile, ethical and future-ready in a competitive market.

FAQs

An AI MVP (Minimum Viable Product) is a rudimentary version of an AI application with only the essential features needed to validate the core concept. This allows businesses to test feasibility, collect feedback and iterate on the solution before building a complete AI product.

To scale an AI MVP, businesses should focus on creating robust data pipelines, adopting scalable cloud infrastructure, relying on MLOps practices, and monitoring model performance in real time so they can adjust accordingly.

Some widespread challenges involve working with massive datasets, retaining accuracy in AI models, managing the costs of infrastructure setup and usage, securing the sensitive data involved in machine learning operations, and moving into production across heterogeneous environments.

Some of the popular technologies and frameworks include TensorFlow, PyTorch, AWS and Azure for model training; Docker and Kubernetes for containerization; and MLOps platforms, e.g. comet.ml, for monitoring and deployment.